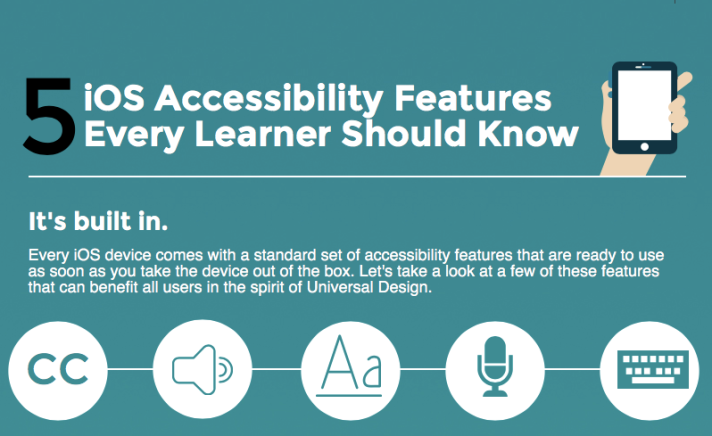

On the occasion of Global Accessibility Awareness Day (GAAD), Apple has created a series of videos highlighting the many ways its iOS devices empower individuals with disabilities to accomplish a variety of goals, from parenting to releasing a new album for a rock band. Each of the videos ends with the tagline “Designed for” followed by the name of the person starring in the video, closing with “Designed for Everyone.” In this brief post, I want to highlight some of the ways in which this is in fact true. Beyond the more specialized features highlighted in the videos (a speech generating app, the VoiceOver screen reader, Made for iPhone hearing aids and Switch Control), there are many other Apple accessibility features that can help everyone, not just people with disabilities:

- Invert Colors: found under Accessibility > Display Accommodations, this feature was originally intended for people with low vision who need a higher contrast display. However, the higher contrast Invert Colors provides can be helpful in a variety of other situations. One that comes to mind is trying to read on a touch screen while outdoors in bright lighting. The increased contrast provided by Invert Colors can make the text stand out more from the washed out display in that kind of scenario.

- Zoom: this is another feature that was originally designed for people with low vision, but it can also be a great tool for teaching. You can use Zoom to not only make the content easier to read for the person “in the last row” in any kind of large space, but also to highlight important information. I often will Zoom In (see what I did there, it’s the title of one of my books) on a specific app or control while delivering technology instruction live or on a video tutorial or webinar. Another use is for hide and reveal activities, where you first zoom into the prompt, give students some “thinking time” and then slide to reveal the part of the screen with the answer.

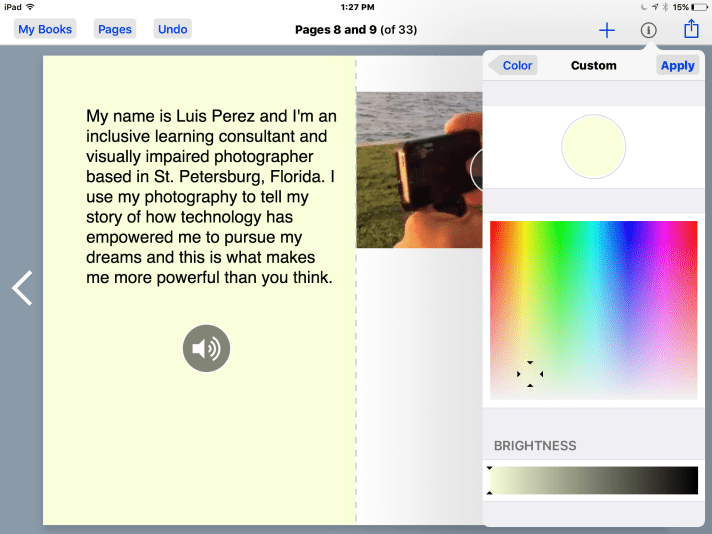

- Magnifier: need to read the microscopic serial number on a new device, or the expiration date on that medicine you bought years ago and are not sure is still safe to take? No problem, Magnifier (new in iOS 10) to the rescue. A triple-click of the Home button will bring up an interface familiar to anyone who has taken a photo on an iOS device. Using the full resolution of the camera, you can not only zoom into the desired text, but also apply a color filter and even freeze the image for a better look.

- Closed Captions: although originally developed to support the Deaf and hard of hearing communities, closed captions are probably the best example of universal design on iOS. Closed captions can also help individuals who speak English as a second language, as well as those who are learning how to read (by providing the reinforcement of hearing as well as seeing the words for true multimodal learning). They can also help make the information accessible in any kind of loud environment (a busy lobby, airport, bar or restaurant) where consuming the content has to be done without the benefit of the audio. Finally, closed captions can help when the audio quality is low due to the age of the film, or when the speaker has a thick accent. On Apple TV, there is an option to automatically rewind the video a few seconds and temporarily turn on the closed captions for the audio you just missed. Just say “what did he/she say?” into the Apple TV remote.

- Speak Screen: this feature, found under Accessibility > Speech, is meant to help people with vision or reading difficulties, but the convenience it provides can help in any situation where looking at the screen is not possible – one good example is while driving. You can open up a news article in your favorite app that supports Speak Screen while at a stop light, then perform the special gesture (a two-finger swipe down from the top of the screen) to hear that story read aloud while you drive. At the next stop light, you can perform the gesture again and in this way catch up with all the news while on your way to work! On the Mac, you can even save the output from the text to speech feature as an audio file. One way you could use this audio is to record instructions for any activity that requires you to perform steps in sequence – your own coach in your pocket, if you will!

- AssistiveTouch: you don’t need to have a motor difficulty to use AssistiveTouch. Just having your device locked into a protective case can pose a problem this feature can solve. With AssistiveTouch, you can bring up onscreen options for buttons that are difficult to reach due to the design of the case or stand. With a case I use for video capture (the iOgrapher), AssistiveTouch is actually required by design. To ensure light doesn’t leak into the lens, the designers of this great case covered up the sleep/wake button. The only way to lock the iPad screen after you are done filming is to select the “lock screen” option in AssistiveTouch. Finally, AssistiveTouch can be helpful on older phones with a failing Home button.

While all of these features are found in the Accessibility area of Settings, they are really “designed for everyone.” Sometimes the problem is not your own physical or cognitive limitations, but constraints imposed by the environment or the situation in which the technology use takes place.

How about you? Are there any other ways you are using the accessibility features to make your life easier even if you don’t have a disability?