At today’s Worldwide Developers Conference (WWDC), Apple announced iOS 6 with a number of accessibility enhancements. I am not a developer (yet!) and don’t have a copy of the OS to check out, so this post is primarily about what I read on the Apple website and on social media. A few of these features (word highlighting for Speak Selection, dictionary enhancements, custom alerts) were tucked away in a single slide Scott Forstall showed, with little additional information on the Apple website. So far, these are the big features announced today:

- Guided Access: for children with autism, this feature will make it easier to stay on task. Guided Access enables a single-app mode where the home button can be disabled, so an app is not closed by mistake. In addition, this feature will make it possible to disable touch in certain areas of an app’s interface (navigation, settings button, etc.). This could be used to remove some distractions, simplify the interface, and make an app easier to learn and use for people with cognitive disabilities. Disabling an area of the interface is pretty easy: draw around it with a finger and iOS will figure out which controls you mean. I loved how Scott Forstall pointed out the other applications of this technology for museums and other education settings (testing), a great example of how inclusive design is for more than just people with disabilities.

- VoiceOver integrated with AssistiveTouch: many people have multiple disabilities, and having this integration between two already excellent accessibility features will make it easier for these individuals to work with their computers by providing an option that addresses multiple needs at once. I work with a wounded veteran who is missing most of one hand, has limited use of the other, and is completely blind. I can’t wait to try out these features together with him.

- VoiceOver integrated with Zoom: people with low vision have had to choose between Zoom and VoiceOver. With iOS 6, we won’t have to make that choice. We will have two features to help us make the most of the vision we have: Zoom to magnify, and VoiceOver to hear content read aloud and rest our vision.

- VoiceOver integrated with Maps: the VoiceOver integration with Maps should be another tool for giving people who are blind even greater independence, by making it easier for us to navigate our environment.

- Siri’s ability to launch apps: this feature makes Siri even more useful for VoiceOver users, who now have two ways to open an app: by touch or by voice.

- Custom vibration patterns for alerts: brings the same feature that has been available on the iPhone for phone calls to other alerts. Great for keeping people with hearing disabilities informed of what’s happening on their devices (Twitter and Facebook notifications, etc.).

- FaceTime over 3G: this will make video chat even more available to people with hearing disabilities.

- New Made for iPhone hearing aids: Apple will work with hearing aid manufacturers to introduce new hearing aids with high-quality audio and long battery life.

- Dictionary improvements: for those of us who work with English language learners, iOS 6 will support Spanish, French and German dictionaries. There will also be an option to create a personal dictionary in iCloud to store your own vocabulary words.

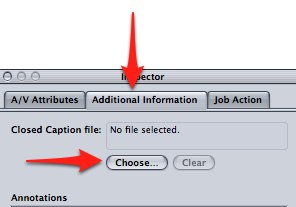

- Word highlighting in Speak Selection: the ability to highlight words as they are spoken aloud by text to speech benefits many students with learning disabilities. With iOS 6, Speak Selection (introduced in iOS 5) will have the same capabilities as many third-party apps.

These are the big features that were announced, but there were some small touches that are just as important. One of these is the deep integration of Facebook into iOS. Facebook is one of those apps I love and hate at the same time. I love the amount of social integration it provides for me and other people with disabilities, but I hate how often the interface changes and how difficult it is to figure out with VoiceOver each time an update takes place. My hope is that Apple’s excellent support for accessibility in built-in apps will extend to the new Facebook integration, providing a more accessible alternative to the Facebook app, one that will continue to support our social inclusion in mainstream society. You can even use Siri to post a Facebook update.

Aside from the new features I mentioned above, I believe the most important accessibility feature shown today is not a built-in feature or an app, but the entire app ecosystem. It is that app ecosystem that has resulted in apps such as AriadneGPS and Toca Boca, both featured in today’s keynote. The built-in features, while great, can only go so far in meeting the diverse needs of people with disabilities, so apps are essential to ensure that accessibility is implemented in a way that is flexible and customized as much as possible to each person. My hope is that Apple’s focus on accessibility apps today will encourage even more developers to focus on this market.

Another great accessibility feature that often gets ignored is the ease with which iOS can be updated to take advantage of new features such as Guided Access and the new VoiceOver integration. As Scott Forstall showed on a chart during the keynote, only about 7% of Android users have upgraded to version 4.0, compared to 80% for iOS 5. What that means is that almost every iOS user out there is taking advantage of AssistiveTouch and Speak Selection, but only a very small group of Android users are taking advantage of the accessibility features in the latest version of Android.

Big props to Apple for all the work they have done to include accessibility in their products, but more importantly for continuing to show people with disabilities in a positive light. I loved seeing a blind person in the last keynote video for Siri. At this keynote, Apple showed another blind person “taking on an adventure” by navigating the woods near his house independently. As a person with a visual disability myself, I found that inspiring. I salute the team at Apple for continuing to make people with disabilities more visible to the mainstream tech world, and for continuing to support innovation through inclusive design (both internally and through its developer community).