As many of you who follow me online know, I am very passionate about photography and the possibilities it offers us as people with disabilities to tell our own stories and exercise our creativity. I love to take photos with my iPhone because it incorporates so many accessibility features that help with my photography, such as Zoom, VoiceOver and Invert Colors. However, I am always looking for new options to expand my photographic horizons, and the GoPro action camera is one that has fascinated me for some time. I believe that having a disability doesn’t mean you should not be able to ski, skydive, or do anything else you set your mind to, and the GoPro has become the go-to camera for action sports and an active lifestyle.

I started out with the least expensive option in the GoPro lineup, the new entry-level Hero, which retails for $129. However, after about a week with the camera, I returned it and opted for the older Hero 3 White model, which I think is a better fit for my needs. The new entry-level Hero has a number of shortcomings due to its low price and limited feature set. Still, if you’re an educator looking for an inexpensive camera for recording classroom activities (science experiments, plays and performances, etc.), this is a nice camera, and there are ways to get around its limitations:

- It does not have an LCD screen for framing shots and adjusting camera settings like the more expensive GoPro 4 Silver. I don’t think this is a significant drawback, since using an LCD screen outdoors would be difficult anyway due to glare. The camera’s wide field of view (FOV) makes it likely that you will capture the shot you want even when you can’t frame it with a viewfinder. As someone who has tunnel vision, I found the wide FOV to be one of the things that made the GoPro so attractive. GoPro does sell an add-on LCD screen, but I’m not sure if it is supported on the new Hero. Regardless, using the add-on screen will probably reduce battery life.

- It does not support Wi-Fi connectivity. With other GoPro cameras (like the Hero 3 White I eventually traded up to), you can set up a Wi-Fi connection between the camera and a smartphone to control the camera and see what you are capturing. However, as with an add-on LCD screen, a drawback of Wi-Fi connectivity is that it drains the battery much faster.

- It has a built-in battery that cannot be replaced or swapped out to extend the time the camera can be used in the field. A workaround is to use any of the many external batteries made for smartphones and tablets, as long as it matches the needs of the GoPro (5V and 1-1.5 amps). An external battery will let you capture longer time-lapse sequences where the camera has to stay on for extended periods. I was also fortunate to find an old power adapter for a Kodak Zi8 camera that lets me plug the GoPro into a wall outlet and charge it much faster than through the USB port on a computer.

- It does not support the higher resolutions of more expensive GoPro cameras. The Hero tops out at 1080p (30 fps) and also supports 720p at 30 or 60 fps. It does not support 4K, which is fine by me, as the higher resolutions result in huge files I can’t possibly store or process on my MacBook Air.

Despite its limitations, I still think the new GoPro Hero is a nice entry-level camera for use in educational settings. It provides access to the many possibilities for using this type of camera to support learning (examples of which are featured on this website by Lisa Tossey) but at a very reasonable price. However, from an accessibility perspective, the biggest problem is not the lack of an LCD viewfinder with features such as large text or a high-contrast mode. Rather, it is that the only feedback available is a series of beeps as you advance through the various menu screens, which are displayed in a small window on the front of the camera. There is no option for spoken feedback. If the camera had Wi-Fi connectivity, I could probably use VoiceOver and other accessibility features on my iPhone to get better access to the camera menus and settings.

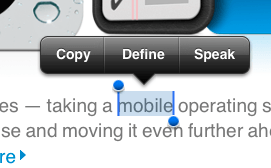

This possibility convinced me to exchange the Hero for the older Hero 3 White, which does support Wi-Fi. I was able to download the free GoPro app from the App Store, and it has some VoiceOver compatibility. I’m convinced that with a few tweaks this app could be made very accessible to the blind. For the most part the buttons have VoiceOver labels that can be spoken aloud to a blind user, but these labels could be clearer and easier to understand when read aloud. For example, I don’t need to hear the following when I choose the option for reviewing the clips on my camera: “GoPro app, list view icon, cam roll.” Just describing it as “Camera Roll” would be sufficient. Surprisingly, the shutter release button is the only button or control with no label at all (it just says “button”). In any case, through the Wi-Fi connection I will still be able to use Zoom and other accessibility features on my iPhone even if the app does not have great VoiceOver support.

With the Hero 3 White I lose the following features, which are only available in the current-generation cameras: Quick Capture, Super Wide Capture and Auto Low Light. Quick Capture starts video recording with a single tap of the top button and time-lapse with an extended press, Super Wide extends the FOV slightly, and Auto Low Light gives the camera better dynamic range in low-light situations. Of these three features, only Super Wide would be significantly helpful to me. I don’t shoot in the kinds of environments where Auto Low Light would come in handy (due to my difficulties with navigating low-light environments), and Quick Capture is a nice-to-have but not an essential feature.

The Hero 3 White also has a slightly lower top frame rate for photos, topping out at 3 fps as compared to 5 fps for the new Hero, as well as a smaller-capacity battery. However, I can compensate for the smaller battery by purchasing a number of inexpensive add-on batteries (retailing for $15-20 each), which the Hero 3 White supports but the new Hero does not. The swappable batteries would make up somewhat for the battery drain that results from using the camera with a Wi-Fi connection to my iPhone for accessibility support.

Along with Wi-Fi connectivity, the Hero 3 White has an HDMI port (for connecting the camera to an HDTV), the ability to connect an external microphone for improved audio (using an adapter), support for higher-capacity memory cards (topping out at 64GB as opposed to 32GB with the new Hero) and, again, swappable batteries. The Hero 3 White also has more customizable time-lapse settings, allowing for intervals from half a second to a full 60 seconds. The new Hero, on the other hand, is set to a single interval of half a second. Both cameras are very similar in terms of mounting options and underwater performance (with a top depth of 131 feet in each case).

I have had great fun with the GoPro cameras during the short time I have owned them, and I really think the Hero 3 White will be a better action camera for me than the entry-level Hero (at least until I can get one of the higher-priced models like the GoPro 4 Silver). I end this post with a photo I took with my new GoPro during a recent visit to the beach.